Keeping logs is essential in software development: they help developers identify issues and debug them quickly. However, logging can become hard to manage, especially when an application writes to several log files. Inefficient logging can cause memory and performance problems that ultimately affect the user experience. One practical technique is to log to two files, each with its own configuration.

If you struggle with logging, this article is for you. It explains how to send your logs to two files with different settings, so that each file stays focused on one purpose and is easier to work with. We’ll discuss the benefits of this technique and compare several ways to implement it.

If you want to take your logging to the next level, read on to the end. You’ll learn how to reduce the complexity of logging and make sure your logs are stored effectively, which improves your productivity and, in turn, the experience of your application’s users. Let’s dive in.

“Logging To Two Files With Different Settings” ~ bbaz

Introduction

Logging is an essential part of software development. It helps developers identify, debug, and fix issues in their code. Logging in a distributed environment raises several challenges, and one of them is logging efficiently to two files, each with its own configuration. In this article, we compare different approaches to this problem.

The Challenge

In a distributed environment, it is common to have multiple instances of the same application running on different machines. Each instance may have some unique configurations and requirements for logging. For example, one instance may require more detailed logs for debugging purposes, while another instance may require only high-level logs for monitoring.
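As a minimal sketch of per-instance settings, the example below uses Python's standard `logging` module (our choice for illustration; the article does not prescribe a language) to pick a log level from an environment variable. The variable name `APP_LOG_PROFILE` and the profile names `debug` and `monitoring` are hypothetical.

```python
import logging
import os

# Hypothetical profiles: a debugging instance keeps everything,
# a monitoring instance keeps only warnings and above.
PROFILE_LEVELS = {
    "debug": logging.DEBUG,
    "monitoring": logging.WARNING,
}


def level_for_instance(env=None):
    """Return this instance's log level based on APP_LOG_PROFILE.

    APP_LOG_PROFILE is an assumed variable name; a missing or unknown
    profile falls back to INFO.
    """
    env = os.environ if env is None else env
    profile = env.get("APP_LOG_PROFILE", "").strip().lower()
    return PROFILE_LEVELS.get(profile, logging.INFO)


if __name__ == "__main__":
    logging.basicConfig(level=level_for_instance())
    logging.getLogger("app").debug("shown only when APP_LOG_PROFILE=debug")
```

Each instance runs the same code; only its environment differs, so a debugging machine and a monitoring machine can log at different levels without separate builds.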

Approach 1: Centralized Log Management

Centralized log management is a common approach to address logging challenges in a distributed environment. In this approach, all the logs from different instances of the application are sent to a centralized logging server. The logging server then filters and sorts the logs according to the unique configurations of each instance.
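To make the server-side filtering and sorting concrete, here is a minimal in-process sketch in Python's standard `logging` module. It assumes each incoming record carries a hypothetical `instance` attribute and simulates only the receiving end; a real deployment would also need a transport (for example `logging.handlers.SocketHandler` on the clients) or a dedicated log server.

```python
import logging
import os
import tempfile


class InstanceFilter(logging.Filter):
    """Accept only records tagged with one instance name."""

    def __init__(self, instance):
        super().__init__()
        self.instance = instance

    def filter(self, record):
        # Records without an "instance" attribute are rejected.
        return getattr(record, "instance", None) == self.instance


def build_router(levels_by_instance, directory):
    """Return a logger that sorts records into one file per instance.

    levels_by_instance maps an instance name to its minimum level,
    e.g. {"web-1": logging.DEBUG, "web-2": logging.WARNING}.
    """
    router = logging.getLogger("central.router")
    router.setLevel(logging.DEBUG)  # per-instance thresholds live on the handlers
    router.propagate = False
    for old in list(router.handlers):  # make repeated calls idempotent
        router.removeHandler(old)
        old.close()
    for instance, level in levels_by_instance.items():
        handler = logging.FileHandler(os.path.join(directory, f"{instance}.log"))
        handler.setLevel(level)
        handler.addFilter(InstanceFilter(instance))
        handler.setFormatter(logging.Formatter("%(asctime)s %(levelname)s %(message)s"))
        router.addHandler(handler)
    return router


if __name__ == "__main__":
    router = build_router({"web-1": logging.DEBUG, "web-2": logging.WARNING},
                          tempfile.mkdtemp())
    # Simulate records arriving from two instances.
    for instance, level, msg in [("web-1", logging.DEBUG, "cache miss"),
                                 ("web-2", logging.DEBUG, "cache miss"),
                                 ("web-2", logging.WARNING, "disk almost full")]:
        router.handle(logging.makeLogRecord(
            {"msg": msg, "levelno": level,
             "levelname": logging.getLevelName(level), "instance": instance}))
```

Here the DEBUG record from `web-2` is dropped because that instance's configuration only keeps warnings, which is the kind of per-instance sorting the central server performs.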

Pros:

- Centralized log management provides a single point of control for logging, making it easier to manage and analyze logs.

- It allows filtering and sorting of logs at a centralized location, reducing the load on individual instances of the application.

Cons:

- Centralized log management can become a bottleneck if the logging server is not able to handle the volume of logs generated by multiple instances of the application.

- It requires additional infrastructure and maintenance costs for setting up and managing the logging server.

Approach 2: Logging Libraries

Logging libraries such as log4net, NLog, or Serilog provide flexible, configurable logging for applications. They let developers define a separate configuration for each instance of the application based on the environment, the machine, or other parameters, and route output to several targets, such as two files with their own levels and formats.

Pros:

- Logging libraries provide a lightweight and flexible solution for logging.

- Developers have full control over the logging configurations for each instance of the application.

Cons:

- Configuring logging libraries can be time-consuming and error-prone.

- Logging libraries require integration with the application code, which can add complexity and maintenance costs.
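Libraries like these are usually driven by configuration rather than code. As a rough analogue (this is not log4net, NLog, or Serilog syntax), Python's `logging.config.dictConfig` can declare two file handlers with different levels and formats. The file names `app-debug.log` and `app-errors.log` are illustrative.

```python
import logging
import logging.config
import os
import tempfile


def dual_file_config(directory):
    """Return a dictConfig schema with two file handlers that differ
    in level and format. File names are illustrative."""
    return {
        "version": 1,
        "disable_existing_loggers": False,
        "formatters": {
            "detailed": {
                "format": "%(asctime)s %(name)s %(levelname)s "
                          "%(filename)s:%(lineno)d %(message)s"
            },
            "brief": {"format": "%(asctime)s %(levelname)s %(message)s"},
        },
        "handlers": {
            "debug_file": {  # everything, with source locations
                "class": "logging.FileHandler",
                "filename": os.path.join(directory, "app-debug.log"),
                "level": "DEBUG",
                "formatter": "detailed",
            },
            "error_file": {  # errors only, compact
                "class": "logging.FileHandler",
                "filename": os.path.join(directory, "app-errors.log"),
                "level": "ERROR",
                "formatter": "brief",
            },
        },
        "loggers": {
            "app": {"level": "DEBUG",
                    "handlers": ["debug_file", "error_file"],
                    "propagate": False},
        },
    }


if __name__ == "__main__":
    logging.config.dictConfig(dual_file_config(tempfile.mkdtemp()))
    log = logging.getLogger("app")
    log.debug("written only to app-debug.log")
    log.error("written to both files")
```

Keeping the settings in a data structure (or a file loaded into one) means each instance can ship a different configuration without code changes, which is the main appeal of the library approach.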

Approach 3: Docker Logging Driver

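Docker supports several logging drivers, such as json-file, syslog, and fluentd, which capture what a container writes to stdout and stderr. Because the driver and its options can be set per container, different instances of the application can log differently. The configuration is usually defined in the logging section of a Docker Compose file (the same format is used for Docker Swarm stacks) or passed with the --log-driver and --log-opt flags of docker run.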

Pros:

- The Docker logging driver provides a simple and efficient way to manage logging for applications running in separate containers.

- Developers can define unique logging configurations for each container without modifying the application code.

Cons:

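- Docker logging drivers only capture what the application writes to stdout and stderr, so they do not help applications that write directly to their own log files, and they apply only to containerized deployments.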

- It requires additional configuration and management of Docker Swarm or Compose files.

Comparison Table

| Approach | Pros | Cons |
|---|---|---|
| Centralized Log Management | Single point of control, filtering and sorting of logs | Potential bottleneck, additional costs |
| Logging Libraries | Flexible and configurable, full control over logging configurations | Time-consuming configuration, integration with application code |
| Docker Logging Driver | Simple and efficient, unique logging configurations for each container | May not be suitable for all environments, additional configuration |

Conclusion

Efficient logging to dual files with unique configurations is a challenge in a distributed environment. Each approach has its own pros and cons, and the choice depends on the specific requirements of the application and the environment. Centralized log management provides a single point of control, but it requires additional infrastructure and can become a bottleneck. Logging libraries provide flexibility and control, but they require integration with the application code. The Docker logging driver provides a simple and efficient solution for logging in separate containers, but it may not be suitable for all environments. To choose the best approach, developers should consider the needs of their application and the resources available for logging.

Thank you for reading about Efficient Logging to Dual Files with Unique Configurations. We hope this information helps you improve your logging practices. As discussed, sending logs to two files, each configured for its own purpose, can make your logging more efficient and save time and resources in the long run.

Remember, the key to efficient logging is to plan ahead: identify what information needs to be logged, and how often. This keeps your logs capturing the necessary data without becoming cumbersome. Automated tools such as log analyzers can also simplify working with the logs, freeing up time for other tasks.

We encourage you to keep exploring the benefits of efficient logging and to share your successes and challenges with others in the logging community. By sharing best practices, we can all improve how we collect and analyze log data. Thank you again for your time.

People Also Ask About Efficient Logging to Dual Files with Unique Configurations

Efficient logging to dual files with unique configurations is a critical aspect of software development. Here are some common questions people ask about this topic, along with their answers:

- What is efficient logging to dual files with unique configurations?

- Why is efficient logging important?

- How can I configure dual logging?

- What are some best practices for efficient logging?

- What tools can I use to analyze log data?

Efficient logging to dual files with unique configurations means writing log output to two separate files, each with its own settings (for example, log level, format, or location), so that each file contains only the data needed for its purpose. Developers can then track and debug issues from a detailed file while monitoring the application from a concise one.

Efficient logging is important because it helps developers identify and fix issues in their code more quickly. With well-organized logs, developers can easily pinpoint the root cause of a problem and take corrective action.

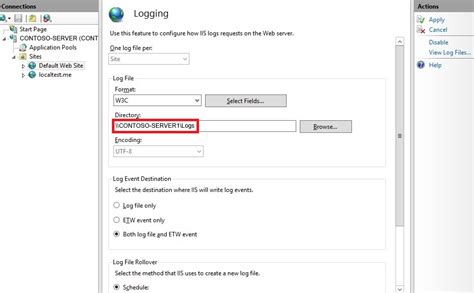

Configuring dual logging involves setting up two separate log files that contain specific data for different purposes. In libraries such as Log4j or Logback, this usually means defining two file appenders, each with its own layout and level threshold, and attaching both to the same logger.
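Log4j and Logback use their own configuration formats, so the following is only an analogous sketch in Python's standard `logging` module. It attaches two `FileHandler` objects with different levels and formats: a general `app.log` and an `audit.log` that receives only records from a hypothetical `app.audit` logger. The file and logger names are illustrative.

```python
import logging
import os
import tempfile


def setup_dual_logging(directory):
    """Attach two file handlers with different settings.

    - app.log: records at INFO or above from the "app" logger tree,
      in a compact format.
    - audit.log: only records sent to "app.audit", at DEBUG or above,
      with a timestamp and the logger name.
    """
    app = logging.getLogger("app")
    app.setLevel(logging.DEBUG)
    app.propagate = False  # keep these records out of the root logger

    general = logging.FileHandler(os.path.join(directory, "app.log"), encoding="utf-8")
    general.setLevel(logging.INFO)
    general.setFormatter(logging.Formatter("%(levelname)s %(message)s"))
    app.addHandler(general)

    audit = logging.getLogger("app.audit")  # inherits DEBUG from "app"
    audit_file = logging.FileHandler(os.path.join(directory, "audit.log"), encoding="utf-8")
    audit_file.setLevel(logging.DEBUG)
    audit_file.setFormatter(
        logging.Formatter("%(asctime)s %(name)s %(levelname)s %(message)s"))
    audit.addHandler(audit_file)
    return app, audit


if __name__ == "__main__":
    app, audit = setup_dual_logging(tempfile.mkdtemp())
    app.debug("ignored: below INFO for app.log")
    app.info("request served")              # app.log only
    audit.debug("user 42 viewed invoice")    # audit.log only
    audit.info("user 42 changed password")   # both files
```

Because `app.audit` propagates to `app`, audit events at INFO or above also appear in the general file. Call `setup_dual_logging` once per process, since each call adds new handlers.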

Some best practices for efficient logging:

- Limit the amount of information logged to avoid cluttering the log files.

- Use log levels to categorize the severity of logged events.

- Include timestamps in log entries for better analysis.

- Rotate log files regularly to avoid filling up disk space.
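Here is a minimal sketch applying these practices with Python's standard `logging.handlers.RotatingFileHandler`: a level threshold limits volume, every entry gets a timestamp and a level name, and the file rotates by size. The size limit and backup count are illustrative values, not recommendations.

```python
import logging
import logging.handlers
import os
import tempfile


def rotating_logger(path, max_bytes=1_000_000, backups=5):
    """Return a logger that follows the practices listed above."""
    logger = logging.getLogger("app.rotating")
    logger.setLevel(logging.INFO)  # drop DEBUG chatter to limit volume
    logger.propagate = False
    # Rolls over to path.1, path.2, ... once the file would exceed max_bytes;
    # only `backups` old files are kept, which bounds disk usage.
    handler = logging.handlers.RotatingFileHandler(
        path, maxBytes=max_bytes, backupCount=backups, encoding="utf-8")
    # Timestamp and severity on every entry.
    handler.setFormatter(logging.Formatter(
        "%(asctime)s %(levelname)-8s %(name)s: %(message)s",
        datefmt="%Y-%m-%dT%H:%M:%S"))
    logger.addHandler(handler)
    return logger


if __name__ == "__main__":
    log = rotating_logger(os.path.join(tempfile.mkdtemp(), "app.log"))
    log.info("service started")
    log.debug("dropped: below the INFO threshold")
```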

Developers can use tools like Splunk or the ELK stack (Elasticsearch, Logstash, and Kibana) to analyze log data. These tools provide powerful search and visualization capabilities that make it easy to identify patterns and trends in log data.