Python 3 is a highly popular programming language, and its floating-point values are a regular source of confusion, even for people who are already familiar with its numeric data types. If you’re curious about how Python 3 handles floats, what follows can significantly affect how you write numeric code.

Python’s floats do not behave differently from those of most other languages. On virtually all platforms they are IEEE 754 double-precision binary values, the same representation used by C’s double and JavaScript’s Number. What did change, starting with Python 3.1 (and backported to Python 2.7), is how floats are displayed: repr() now prints the shortest decimal string that converts back to exactly the same float. That is why 4*0.1 prints as 0.4 while 3*0.1 prints as 0.30000000000000004. The arithmetic itself is unchanged, so some operations on decimal fractions still produce results that look surprising.

Following the IEEE 754 standard means that floating-point operations give largely the same results across processors, which helps prevent compatibility issues. It also means your code has to account for the standard’s limits if it is to work correctly. If you’re interested in programming languages or want to improve your Python skills, read on to learn more about the curious case of Python 3’s floating-point values.

“Why Does The Floating-Point Value Of 4*0.1 Look Nice In Python 3 But 3*0.1 Doesn’t?” ~ bbaz

The Curious Case of Python 3’s Floating-Point Values

Introduction

Python is one of the most popular programming languages because of its simplicity, readability, and powerful features. Python 3 brought several improvements over its predecessor, including a more readable way of displaying floating-point values. However, the underlying binary arithmetic is unchanged, so some peculiarities remain in Python 3’s floating-point handling.

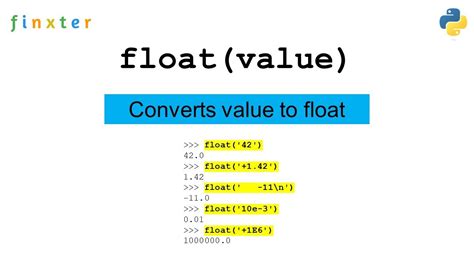

The Floating-Point Data Type

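Floating-point numbers represent real numbers with a fractional part, including values written in scientific notation such as 1.5e-3. In Python, the float data type is used for them. A float carries 53 bits of precision, which corresponds to roughly 15 to 17 significant decimal digits, but it can still be imprecise because numbers are stored in binary, and many decimal fractions, such as 0.1, have no exact binary representation.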
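The precision figures can be inspected from Python itself. The following is a minimal sketch, assuming a typical CPython build where float is an IEEE 754 double; the variable names are only illustrative.

```python
import sys
from decimal import Decimal

# Properties of the platform's float type (binary64 on typical CPython builds).
mantissa_bits = sys.float_info.mant_dig   # bits of precision in the significand
safe_digits = sys.float_info.dig          # decimal digits that always survive a round trip

# Decimal(float) converts the stored binary value exactly, without rounding,
# so it reveals the number that is really stored for the literal 0.1.
stored_tenth = Decimal(0.1)

print(mantissa_bits)   # 53
print(safe_digits)     # 15
print(stored_tenth)    # 0.1000000000000000055511151231257827021181583404541015625
```

The last line shows that the literal 0.1 is stored as a value slightly larger than one tenth.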

Numerical Accuracy Issues

Numerical accuracy issues arise when the computer has to round a value to the nearest representable floating-point number. These issues are due to the limited precision of floating-point numbers. For example, adding 0.1 and 0.2 in Python results in 0.30000000000000004 instead of 0.3.
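A short sketch of the effect, which also covers the 4*0.1 versus 3*0.1 case from the quote at the top of the article. It assumes Python 3.1 or later, where repr() prints the shortest decimal string that maps back to the same float.

```python
total = 0.1 + 0.2
print(total)            # 0.30000000000000004
print(total == 0.3)     # False

three_tenths = 3 * 0.1
four_tenths = 4 * 0.1

# repr() picks the shortest string that converts back to the same float,
# so a result that happens to be the float nearest to 0.4 prints as "0.4".
print(repr(three_tenths))   # 0.30000000000000004
print(repr(four_tenths))    # 0.4

# Asking for more digits shows that neither value is exact.
print(format(three_tenths, ".20f"))
print(format(four_tenths, ".20f"))
```

Both products carry a representation error. The result of 4*0.1 merely lands on the same float as the literal 0.4, so the short form is enough to identify it.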

Comparison Table

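| Expression | Python 2 Result | Python 3 Result |
|---|---|---|
| 0.1 + 0.2 == 0.3 | False | False |
| 0.1 + 0.1 + 0.1 == 0.3 | False | False |
| 1.1 + 2.2 == 3.3 | False | False |

The results are identical in both versions because the comparisons are carried out on the same IEEE 754 doubles; only the way floats are printed differs between older and newer releases.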
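The expressions in the table can be evaluated directly. This is a small sketch, assuming a platform with IEEE 754 doubles, where each comparison should come out False regardless of the major Python version, since the arithmetic is done on the same binary values.

```python
# Each entry maps the expression's text to its evaluated result.
checks = {
    "0.1 + 0.2 == 0.3": 0.1 + 0.2 == 0.3,
    "0.1 + 0.1 + 0.1 == 0.3": 0.1 + 0.1 + 0.1 == 0.3,
    "1.1 + 2.2 == 3.3": 1.1 + 2.2 == 3.3,
}

for expression, result in checks.items():
    print(f"{expression:<26} {result}")

# The sums themselves, as repr() shows them.
print(0.1 + 0.1 + 0.1)   # 0.30000000000000004
print(1.1 + 2.2)         # 3.3000000000000003
```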

Rounding Errors

In Python, the round() function rounds floating-point numbers to a given number of digits. However, its results can be surprising. For example, round(2.675, 2) might be expected to return 2.68 but instead returns 2.67, because the float closest to 2.675 is slightly smaller than 2.675.
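The sketch below reproduces the round(2.675, 2) result and shows one possible way to get conventional half-up rounding with the decimal module. The tie-handling lines are an addition not covered in the text above: in Python 3, round() sends exact ties to the nearest even value.

```python
from decimal import Decimal, ROUND_HALF_UP

rounded = round(2.675, 2)
print(rounded)              # 2.67

# The float nearest to 2.675 lies just below it, so there is no tie to break.
stored = Decimal(2.675)
print(stored)               # 2.67499999999999982236431605997495353221893310546875

# Exact ties are rounded to the nearest even result in Python 3.
ties = (round(0.5), round(1.5), round(2.5))
print(ties)                 # (0, 2, 2)

# Building the Decimal from a string keeps the value exact, and quantize()
# applies the rounding mode that is asked for.
half_up = Decimal("2.675").quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)
print(half_up)              # 2.68
```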

Binary Representation

Floating-point numbers are represented in binary using a sign bit, an exponent, and a mantissa (also called the significand). In the 64-bit format these take 1, 11, and 52 bits respectively. The mantissa holds the significant digits of the number. Because only a finite number of bits is available, a value that has no finite binary expansion is stored as the nearest representable number, which is where the inaccuracies come from.
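The three fields can be pulled apart with the struct module. This is an illustrative sketch: float_fields is a hypothetical helper written for this article, not a standard-library function, and it assumes the 64-bit IEEE 754 layout.

```python
import struct


def float_fields(value: float) -> tuple:
    """Split a float into (sign, biased exponent, fraction) per the binary64 layout."""
    # Reinterpret the 8 bytes of the double as one unsigned 64-bit integer.
    (bits,) = struct.unpack(">Q", struct.pack(">d", value))
    sign = bits >> 63                   # 1 bit
    exponent = (bits >> 52) & 0x7FF     # 11 bits, stored with a bias of 1023
    fraction = bits & ((1 << 52) - 1)   # 52 bits of the mantissa
    return sign, exponent, fraction


sign, exponent, fraction = float_fields(0.1)
print(sign, exponent, hex(fraction))    # 0 1019 0x999999999999a

# float.hex() shows the same information in a compact form.
print((0.1).hex())                      # 0x1.999999999999ap-4
```

The repeating 9s in the fraction are the binary counterpart of a recurring decimal: 0.1 has no finite binary expansion, so the pattern is cut off and rounded at the last bit.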

Possible Solutions

One possible solution to the floating-point accuracy issue is to use the decimal module in Python. The decimal module represents decimal fractions exactly and lets you choose the working precision, at the cost of speed. Another alternative is the fractions module, which works with exact rational numbers.
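A minimal sketch of both modules applied to the 0.1 + 0.2 example. Note that the values are built from strings or integers; building them from a float would carry the binary error along.

```python
from decimal import Decimal
from fractions import Fraction

# Decimal built from strings stores the decimal digits exactly.
decimal_sum = Decimal("0.1") + Decimal("0.2")
print(decimal_sum)                       # 0.3
print(decimal_sum == Decimal("0.3"))     # True

# Fraction stores an exact numerator and denominator.
fraction_sum = Fraction(1, 10) + Fraction(2, 10)
print(fraction_sum)                      # 3/10
print(fraction_sum == Fraction(3, 10))   # True

# Constructing from a float preserves the float's representation error.
from_float_matches = Decimal(0.1) == Decimal("0.1")
print(from_float_matches)                # False
```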

Best Practices

To avoid floating-point issues, it’s best to use the appropriate data type and avoid comparing floats for exact equality. Instead, compare with a tolerance (for example with math.isclose()), round only when presenting results rather than in intermediate steps, and use numerical methods that take floating-point inaccuracies into account.
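These practices can be sketched with the standard library alone. The tolerance is math.isclose()'s default, and whether that default suits a given application is an assumption to check case by case.

```python
import math

total = 0.1 + 0.2

# Compare with a tolerance instead of exact equality.
print(total == 0.3)                # False
print(math.isclose(total, 0.3))    # True

# Accumulating many small terms lets the rounding errors build up.
# An explicit loop is used so the result does not depend on how the
# built-in sum() is implemented in a given Python version.
values = [0.1] * 10
running = 0.0
for value in values:
    running += value
print(running)                     # 0.9999999999999999

# math.fsum() tracks the lost low-order bits and returns an accurately rounded sum.
accurate = math.fsum(values)
print(accurate)                    # 1.0

# Round only when formatting for display.
label = f"{total:.2f}"
print(label)                       # 0.30
```

When one of the compared values can be zero, math.isclose() needs an explicit abs_tol argument, because a relative tolerance alone never matches against 0.0.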

Opinions

The issue of floating-point inaccuracies is not unique to Python. It’s a general problem that arises due to the limited precision of floating-point numbers. While Python 3 displays floats more readably than Python 2.6 and earlier did, the underlying issue persists. Therefore, it’s necessary to use appropriate solutions and best practices to keep floating-point inaccuracies under control.

Thank you for taking the time to read about The Curious Case of Python 3’s Floating-Point Values. We hope that this article has been informative and has provided you with a better understanding of how floating-point values work in Python 3. It is important to keep in mind that while floating-point values may seem simple on the surface, they can be quite complex and require careful consideration when working with them.

As with any programming language, it is important to stay up-to-date with the latest updates and changes to ensure that your code is running as efficiently and effectively as possible. By understanding the nuances of floating-point values in Python 3, you can avoid potential issues and ensure that your code is working as intended.

Again, thank you for reading and we hope that this article has been helpful. If you have any questions or comments, please feel free to reach out to us. We look forward to hearing from you!

Here are some common questions that people ask about The Curious Case of Python 3’s Floating-Point Values:

- What are floating-point values in Python 3?

  Floating-point values are numbers with a fractional component, stored in binary with finite precision. In Python 3, a float is implemented as a C double, which on virtually all platforms follows the IEEE 754 double-precision format.

- Why is the representation of floating-point values in Python 3 important?

  The representation of floating-point values is important because it affects the accuracy of calculations. Because floating-point values are represented in binary, many decimal fractions cannot be represented exactly. This can lead to rounding errors and other inaccuracies in calculations.

- What is the curious case of Python 3’s floating-point values?

  The curious case of Python 3’s floating-point values refers to the fact that some seemingly simple calculations can produce unexpected results due to rounding errors. For example, adding 0.1 and 0.2 in Python 3 results in 0.30000000000000004 rather than 0.3.

- How can I avoid issues with floating-point values in Python 3?

  One way to avoid issues with floating-point values is to use the decimal module, which represents decimal fractions exactly. Another approach is to keep using floats but compare them with a tolerance, for example with math.isclose(), and to round values only when displaying them, since rounding intermediate results does not remove the underlying representation error.

- Are there any other programming languages with similar issues with floating-point values?

  Yes. Most programming languages implement floating-point values using the IEEE 754 standard, so they show the same precision and rounding behavior. The comparison 0.1 + 0.2 == 0.3 is false in C, Java, and JavaScript as well.